Day 6 -- Chat History¶

Your trip planner generates great itineraries, but every call starts from scratch. Real users iterate -- "make it cheaper," "add a beach day," "what about hotels near the train station." Today you add conversation memory so the planner remembers what you have discussed.

What we're building today¶

graph LR

U[User] -->|"POST /plan"| GW[gateway]

GW -->|"trip_planning"| PL[planner-agent]

PL -->|"chat_history"| CH[chat-history-agent]

PL -->|"+claude"| CP[claude-provider]

PL -.->|failover| OP[openai-provider]

PL ==>|tier-1| UPA[user-prefs-agent]

CP -.->|tier-2| FA[flight-agent]

CP -.->|tier-2| HA[hotel-agent]

CP -.->|tier-2| WA[weather-agent]

CP -.->|tier-2| PA[poi-agent]

FA -->|depends on| UPA

PA -->|depends on| WA

style U fill:#555,color:#fff

style GW fill:#e67e22,color:#fff

style CH fill:#1abc9c,color:#fff

style PL fill:#9b59b6,color:#fff

style CP fill:#9b59b6,color:#fff

style OP fill:#9b59b6,color:#fff

style FA fill:#4a9eff,color:#fff

style PA fill:#4a9eff,color:#fff

style UPA fill:#1a8a4a,color:#fff

style WA fill:#1a8a4a,color:#fff

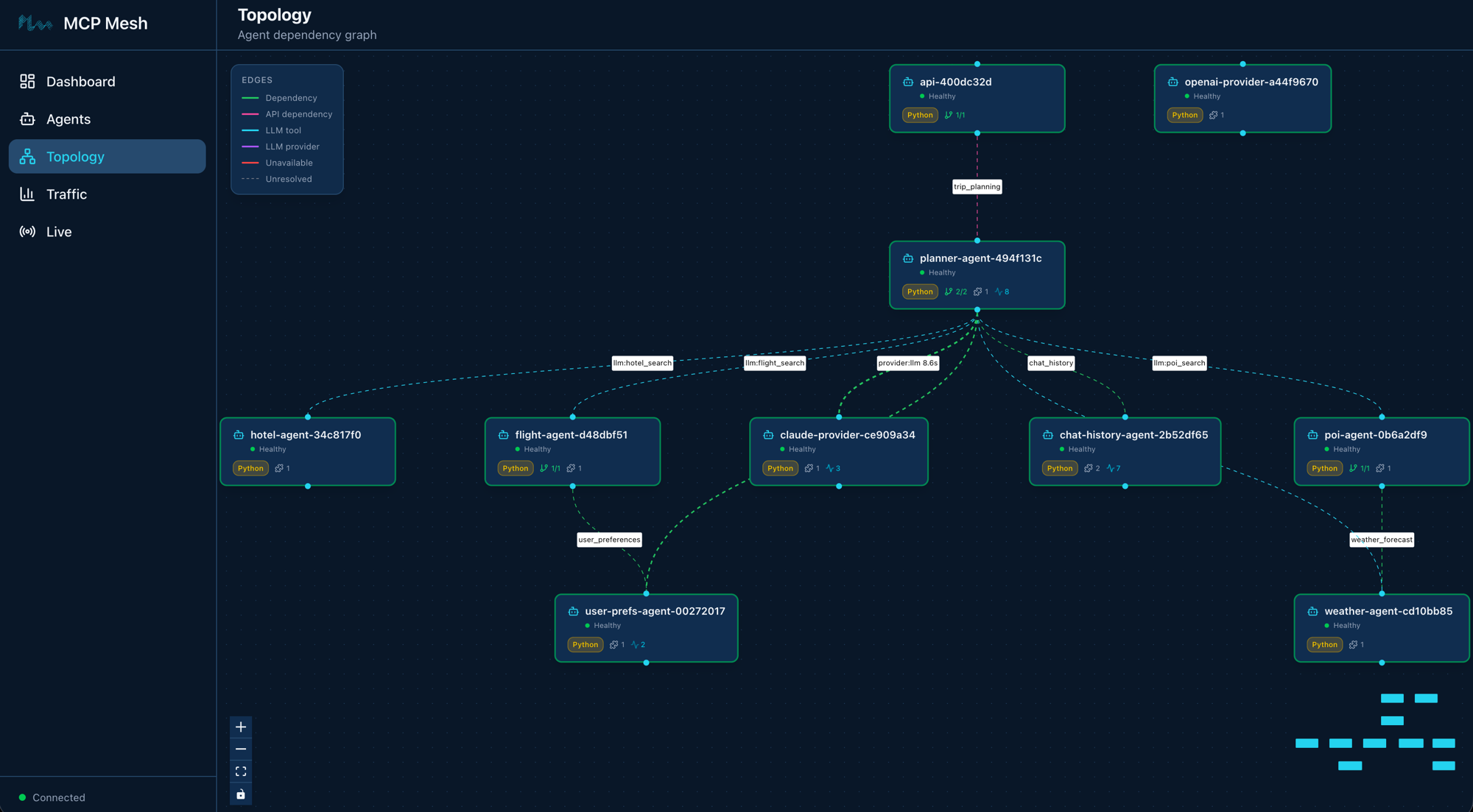

style HA fill:#1a8a4a,color:#fffTen agents. Everything from Day 5 plus chat-history-agent in teal. The planner fetches prior turns from chat history before calling the LLM, and saves both the user message and the response afterward. The gateway stays thin -- it just passes the session ID through.

Today has four parts:

- Build the chat history agent -- a tool agent backed by Redis

- Update the planner -- add history fetch and save around the LLM call

- Update the gateway -- add session ID passthrough

- Walk the trace -- see history calls in the distributed trace

Part 1: Build the chat history agent¶

Chat history is just another mesh tool agent. The same dependency injection that wires flight-agent wires chat-history-agent. There is no special framework primitive for state -- you write an agent that wraps a data store, and other agents call it like any other tool.

Scaffold the agent¶

Created agent 'chat-history-agent' in chat-history-agent/

Generated files:
chat-history-agent/
├── .dockerignore
├── Dockerfile
├── README.md
├── __init__.py
├── __main__.py
├── helm-values.yaml
├── main.py
└── requirements.txt

Add Redis to requirements¶

The agent needs redis-py to talk to the Redis instance from your observability stack (Day 3's docker-compose.observability.yml already runs Redis on port 6379):

# chat-history-agent dependencies
# Add your third-party dependencies here
# Note: mcp-mesh is provided by the runtime environment (local venv or Docker base image)
redis

Replace main.py¶

Replace the generated main.py with:

import json
import os

import mesh
import redis
from fastmcp import FastMCP

app = FastMCP("Chat History Agent")

# Connection settings come from the environment so the same code runs
# locally, in Docker, and in Kubernetes.
redis_client = redis.Redis(
    host=os.getenv("REDIS_HOST", "localhost"),
    port=int(os.getenv("REDIS_PORT", "6379")),
    decode_responses=True,  # return str instead of bytes
)


@app.tool()
@mesh.tool(
    capability="chat_history",
    description="Save a conversation turn to Redis",
    tags=["chat", "history", "state"],
)
async def save_turn(session_id: str, role: str, content: str) -> dict:
    """Save a single conversation turn (user or assistant message)."""
    turn = json.dumps({"role": role, "content": content})
    # Append to the tail of the session's list so turns stay in chronological order
    redis_client.rpush(f"chat:{session_id}", turn)
    length = redis_client.llen(f"chat:{session_id}")
    return {"session_id": session_id, "role": role, "saved": True, "total_turns": length}


@app.tool()
@mesh.tool(
    capability="chat_history",
    description="Retrieve recent conversation turns from Redis",
    tags=["chat", "history", "state"],
)
async def get_history(session_id: str, limit: int = 20) -> list[dict]:
    """Retrieve the most recent turns for a session."""
    # Negative indices count from the end: the last `limit` entries, oldest first
    raw = redis_client.lrange(f"chat:{session_id}", -limit, -1)
    return [json.loads(entry) for entry in raw]


@mesh.agent(
    name="chat-history-agent",
    version="1.0.0",
    description="TripPlanner Redis-backed chat history (Day 6)",
    http_port=9109,
    enable_http=True,
    auto_run=True,
)
class ChatHistoryAgent:
    pass

Two tools, one capability. save_turn appends a JSON-encoded turn to a Redis list keyed by session ID. get_history reads the most recent turns from that list. Both tools share the chat_history capability -- when the planner declares a dependency on chat_history, mesh injects a proxy that can call either tool by name.
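To see exactly what the two tools return without a running Redis, here is a minimal stdlib-only sketch that mimics the two list commands they rely on: RPUSH appends to the tail, and LRANGE with negative indices (-limit, -1) returns the last limit entries, oldest first. InMemoryChatStore and the two module-level functions are hypothetical stand-ins for illustration, not part of mesh or redis-py.

```python
import json


class InMemoryChatStore:
    """Hypothetical stand-in for the Redis list commands chat-history-agent uses."""

    def __init__(self) -> None:
        self._lists: dict[str, list[str]] = {}

    def rpush(self, key: str, value: str) -> int:
        # RPUSH appends to the tail and returns the new list length.
        items = self._lists.setdefault(key, [])
        items.append(value)
        return len(items)

    def lrange(self, key: str, start: int, stop: int) -> list[str]:
        # Mirror Redis LRANGE: stop is inclusive, negative indices count from
        # the end, and out-of-range indices are clamped instead of raising.
        items = self._lists.get(key, [])
        n = len(items)
        if start < 0:
            start = max(n + start, 0)
        if stop < 0:
            stop = n + stop
        stop = min(stop, n - 1)
        if start > stop:
            return []
        return items[start : stop + 1]


def save_turn(store: InMemoryChatStore, session_id: str, role: str, content: str) -> int:
    # Same encoding as the agent: one JSON object per list entry.
    return store.rpush(f"chat:{session_id}", json.dumps({"role": role, "content": content}))


def get_history(store: InMemoryChatStore, session_id: str, limit: int = 20) -> list[dict]:
    raw = store.lrange(f"chat:{session_id}", -limit, -1)
    return [json.loads(entry) for entry in raw]


if __name__ == "__main__":
    store = InMemoryChatStore()
    for i in range(25):
        save_turn(store, "demo", "user" if i % 2 == 0 else "assistant", f"message {i}")
    recent = get_history(store, "demo", limit=20)
    print(len(recent), recent[0]["content"], recent[-1]["content"])
    # prints: 20 message 5 message 24
```

Because limit counts list entries and every planner request stores two of them (user and assistant), the default limit=20 covers roughly the last ten exchanges.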

The Redis connection is straightforward: a module-level redis.Redis client pointed at localhost:6379 (configurable via environment variables for Docker/Kubernetes deployment).

redis_client = redis.Redis(
    host=os.getenv("REDIS_HOST", "localhost"),
    port=int(os.getenv("REDIS_PORT", "6379")),
    decode_responses=True,
)
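If you want the host/port lookup to be testable on its own, it can be pulled into a small function. This is a hypothetical refactor: load_redis_settings and RedisSettings are names introduced here, not part of the tutorial's code. It reads the same REDIS_HOST and REDIS_PORT variables with the same defaults, and fails fast with a clear message if the port is not a valid integer.

```python
import os
from dataclasses import dataclass
from typing import Mapping


@dataclass(frozen=True)
class RedisSettings:
    host: str
    port: int


def load_redis_settings(env: Mapping[str, str] = os.environ) -> RedisSettings:
    """Read REDIS_HOST / REDIS_PORT with the same defaults as main.py."""
    host = env.get("REDIS_HOST", "localhost")
    raw_port = env.get("REDIS_PORT", "6379")
    try:
        port = int(raw_port)
    except ValueError:
        raise ValueError(f"REDIS_PORT must be an integer, got {raw_port!r}") from None
    if not 0 < port < 65536:
        raise ValueError(f"REDIS_PORT out of range: {port}")
    return RedisSettings(host=host, port=port)
```

The agent would then build its client with redis.Redis(host=settings.host, port=settings.port, decode_responses=True), and tests can pass a plain dict instead of touching the real environment.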

Why this works¶

Swap Redis for Postgres by editing one agent. Add encryption by extending one agent. The gateway and planner do not move. mesh does not need a chat history primitive -- the general abstraction (any MCP tool anywhere is a local function call) handles it.
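As a sketch of what "edit one agent" could mean in practice, here is the same save/get contract implemented on a SQL table instead of a Redis list. It uses the standard library's sqlite3 as a stand-in for Postgres so it runs anywhere; the SqlChatStore class, the table name, and the schema are assumptions for illustration. The tool names, arguments, and return shapes match the Redis version, which is what keeps the planner unaware of the swap.

```python
import sqlite3


class SqlChatStore:
    """Hypothetical SQL-backed store with the same contract as the Redis version."""

    def __init__(self, path: str = ":memory:") -> None:
        self._conn = sqlite3.connect(path)
        self._conn.execute(
            "CREATE TABLE IF NOT EXISTS turns ("
            " id INTEGER PRIMARY KEY AUTOINCREMENT,"
            " session_id TEXT NOT NULL,"
            " role TEXT NOT NULL,"
            " content TEXT NOT NULL)"
        )

    def save_turn(self, session_id: str, role: str, content: str) -> dict:
        with self._conn:  # commits on success, rolls back on error
            self._conn.execute(
                "INSERT INTO turns (session_id, role, content) VALUES (?, ?, ?)",
                (session_id, role, content),
            )
        (total,) = self._conn.execute(
            "SELECT COUNT(*) FROM turns WHERE session_id = ?", (session_id,)
        ).fetchone()
        return {"session_id": session_id, "role": role, "saved": True, "total_turns": total}

    def get_history(self, session_id: str, limit: int = 20) -> list[dict]:
        # Take the newest `limit` rows, then flip them back to chronological order.
        rows = self._conn.execute(
            "SELECT role, content FROM turns WHERE session_id = ? ORDER BY id DESC LIMIT ?",
            (session_id, limit),
        ).fetchall()
        return [{"role": role, "content": content} for role, content in reversed(rows)]
```

One small behavioral difference to be aware of: limit=0 returns nothing here, while the Redis version's LRANGE(key, 0, -1) returns the whole list.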

Part 2: Update the planner¶

The planner gains chat history as a tier-1 dependency alongside user preferences. It fetches history before the LLM call and saves turns after. The gateway stays thin -- it just passes the session ID.

import mesh
from fastmcp import FastMCP
from mesh import MeshContextModel
from pydantic import Field

app = FastMCP("Planner Agent")


class TripRequest(MeshContextModel):
    """Context model for the trip planning prompt template."""

    destination: str = Field(..., description="Travel destination city")
    dates: str = Field(..., description="Travel dates (e.g. June 1-5, 2026)")
    budget: str = Field(..., description="Total trip budget (e.g. $2000)")
    user_preferences: str = Field(
        default="", description="User travel preferences (injected at runtime)"
    )


@app.tool()
@mesh.llm(
    system_prompt="file://prompts/plan_trip.j2",
    context_param="ctx",
    provider={"capability": "llm", "tags": ["+claude"]},
    filter=[
        {"capability": "flight_search"},
        {"capability": "hotel_search"},
        {"capability": "weather_forecast"},
        {"capability": "poi_search"},
    ],
    filter_mode="all",
    max_iterations=10,
)
@mesh.tool(
    capability="trip_planning",
    description="Generate a trip itinerary using an LLM with real travel data",
    tags=["planner", "travel", "llm"],
    dependencies=["user_preferences", "chat_history"],
)
async def plan_trip(
    destination: str,
    dates: str,
    budget: str,
    message: str = "",
    session_id: str = "",
    conversation_history: list[dict] = [],
    user_prefs: mesh.McpMeshTool = None,
    chat_history: mesh.McpMeshTool = None,
    ctx: TripRequest = None,
    llm: mesh.MeshLlmAgent = None,
) -> str:
    """Plan a trip given a destination, dates, and budget."""
    # Tier-1: prefetch user preferences before the LLM call
    prefs = {}
    if user_prefs:
        prefs = await user_prefs(user_id="demo-user")

    # Inject preferences into the context so the Jinja template can use them
    prefs_summary = (
        f"Preferred airlines: {', '.join(prefs.get('preferred_airlines', []))}. "
        f"Budget limit: ${prefs.get('budget_usd', 'flexible')}. "
        f"Interests: {', '.join(prefs.get('interests', []))}. "
        f"Minimum hotel stars: {prefs.get('hotel_min_stars', 'any')}."
        if prefs
        else "No user preferences available."
    )

    # Tier-1: fetch chat history if a session is active
    history = []
    if session_id and chat_history:
        history = await chat_history.call_tool("get_history", {
            "session_id": session_id,
            "limit": 20,
        })
        if isinstance(history, dict):
            history = history.get("result", [])

    # Build the message for the LLM. When history is present,
    # pass the full turn list so the LLM sees prior context.
    user_text = message or (
        f"Plan a trip to {destination} from {dates} with a budget of {budget}."
    )
    if history:
        messages = list(history)
        messages.append({"role": "user", "content": user_text})
        result = await llm(
            messages,
            context={"user_preferences": prefs_summary},
        )
    else:
        result = await llm(
            user_text,
            context={"user_preferences": prefs_summary},
        )

    # Save turns to chat history so the next request sees them
    if session_id and chat_history:
        await chat_history.call_tool("save_turn", {
            "session_id": session_id,
            "role": "user",
            "content": user_text,
        })
        response_text = result if isinstance(result, str) else str(result)
        await chat_history.call_tool("save_turn", {
            "session_id": session_id,
            "role": "assistant",
            "content": response_text,
        })

    return result


@mesh.agent(
    name="planner-agent",
    version="1.0.0",
    description="TripPlanner LLM planner with tool access (Day 6)",
    http_port=9107,
    enable_http=True,
    auto_run=True,
)
class PlannerAgent:
    pass

Dependency declaration¶

The @mesh.tool decorator now declares two dependencies instead of one:

    dependencies=["user_preferences", "chat_history"],

Both user_preferences and chat_history are tier-1 dependencies -- resolved before the tool function runs. The planner calls chat_history.call_tool("get_history", {...}) and chat_history.call_tool("save_turn", {...}) because the chat_history capability exposes two tools. For user_prefs, the single-tool shorthand (await user_prefs(...)) still works.

History fetch¶

Before the LLM call, the planner fetches the conversation history for the current session:

    # Tier-1: fetch chat history if a session is active
    history = []
    if session_id and chat_history:
        history = await chat_history.call_tool("get_history", {
            "session_id": session_id,
            "limit": 20,
        })
        if isinstance(history, dict):
            history = history.get("result", [])
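The isinstance check guards against the result arriving wrapped in a dict under a "result" key rather than as a bare list. A slightly more defensive version of that unwrapping, written as a standalone helper so it can be unit-tested, might look like this. normalize_history is a hypothetical name, and dropping malformed or non-user/assistant entries is a design choice added here, not something the tutorial's planner does.

```python
from typing import Any


def normalize_history(raw: Any) -> list[dict]:
    """Unwrap a get_history result and keep only well-formed chat turns."""
    if isinstance(raw, dict):
        raw = raw.get("result", [])
    if not isinstance(raw, list):
        return []
    turns = []
    for entry in raw:
        # Keep only {"role": "user"|"assistant", "content": str} entries, so a
        # stored "system" message cannot compete with the template's system prompt.
        if (
            isinstance(entry, dict)
            and entry.get("role") in ("user", "assistant")
            and isinstance(entry.get("content"), str)
        ):
            turns.append({"role": entry["role"], "content": entry["content"]})
    return turns
```

With a helper like this, the fetch block would shrink to history = normalize_history(await chat_history.call_tool("get_history", {...})).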

Multi-turn messages¶

When history is present, the planner passes the full message list to the LLM instead of a single string:

    # Build the message for the LLM. When history is present,
    # pass the full turn list so the LLM sees prior context.
    user_text = message or (
        f"Plan a trip to {destination} from {dates} with a budget of {budget}."
    )
    if history:
        messages = list(history)
        messages.append({"role": "user", "content": user_text})
        result = await llm(
            messages,
            context={"user_preferences": prefs_summary},
        )
    else:
        result = await llm(
            user_text,
            context={"user_preferences": prefs_summary},
        )

The @mesh.llm decorator handles multi-turn natively -- pass a list of {"role": "...", "content": "..."} dicts as the first argument to llm() and the decorator builds the correct LLM API call. The system prompt from the Jinja2 template is inserted automatically.
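To make the shape concrete, here is a sketch of the list the planner hands to llm() on a follow-up request, plus a rough approximation of what it looks like once a system prompt is placed in front. build_messages and with_system_prompt are hypothetical helpers; the real insertion happens inside @mesh.llm, and the exact wire format depends on the provider (OpenAI-style chat APIs put the system message first in the list, while Anthropic's Messages API takes it as a separate system parameter).

```python
def build_messages(history: list[dict], user_text: str) -> list[dict]:
    """Prior turns (oldest first) followed by the new user message, as in plan_trip."""
    messages = list(history)  # copy so the fetched history is not mutated
    messages.append({"role": "user", "content": user_text})
    return messages


def with_system_prompt(system_prompt: str, messages: list[dict]) -> list[dict]:
    # Approximation only: OpenAI-style ordering with the rendered template first.
    return [{"role": "system", "content": system_prompt}, *messages]


history = [
    {"role": "user", "content": "Plan a trip to Kyoto from June 1-5, 2026 with a budget of $2000."},
    {"role": "assistant", "content": "## Kyoto Trip Itinerary ..."},
]
messages = build_messages(history, "Can you make it cheaper? I want to stay under $1500.")
```

The alternation matters: after a save cycle the stored list always ends with an assistant turn, so appending the new user message keeps the user/assistant sequence that chat APIs expect.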

History save¶

After the LLM responds, the planner saves both the user turn and the assistant turn so the next request sees them:

    # Save turns to chat history so the next request sees them
    if session_id and chat_history:
        await chat_history.call_tool("save_turn", {
            "session_id": session_id,
            "role": "user",
            "content": user_text,
        })
        response_text = result if isinstance(result, str) else str(result)
        await chat_history.call_tool("save_turn", {
            "session_id": session_id,
            "role": "assistant",
            "content": response_text,
        })
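The fetch, call, save cycle can be exercised without the mesh by swapping in test doubles. In this sketch, FakeChatHistory mimics the call_tool("name", {...}) style used above and fake_llm stands in for the injected llm; both are hypothetical. plan_turn is a trimmed-down copy of the planner's history logic (no preferences, no travel tools). It shows that each request appends two entries and that the third request's LLM call sees four prior turns plus the new question.

```python
import asyncio
import json


class FakeChatHistory:
    """Hypothetical test double for the chat_history proxy (call_tool style)."""

    def __init__(self) -> None:
        self.store: dict[str, list[str]] = {}

    async def call_tool(self, name: str, args: dict):
        key = f"chat:{args['session_id']}"
        if name == "save_turn":
            turns = self.store.setdefault(key, [])
            turns.append(json.dumps({"role": args["role"], "content": args["content"]}))
            return {"saved": True, "total_turns": len(turns)}
        if name == "get_history":
            return [json.loads(t) for t in self.store.get(key, [])[-args["limit"]:]]
        raise ValueError(f"unknown tool {name}")


seen_lengths: list[int] = []


async def fake_llm(prompt) -> str:
    # Record how many messages the "LLM" received, then return a canned reply.
    messages = prompt if isinstance(prompt, list) else [{"role": "user", "content": prompt}]
    seen_lengths.append(len(messages))
    return f"reply #{len(seen_lengths)}"


async def plan_turn(chat_history: FakeChatHistory, session_id: str, user_text: str) -> str:
    history = await chat_history.call_tool("get_history", {"session_id": session_id, "limit": 20})
    if isinstance(history, dict):
        history = history.get("result", [])
    if history:
        result = await fake_llm([*history, {"role": "user", "content": user_text}])
    else:
        result = await fake_llm(user_text)
    # Save the user turn first, then the assistant turn, as the planner does.
    for role, content in (("user", user_text), ("assistant", result)):
        await chat_history.call_tool(
            "save_turn", {"session_id": session_id, "role": role, "content": content}
        )
    return result


async def main() -> None:
    chat = FakeChatHistory()
    for text in ("Plan Kyoto", "Make it cheaper", "Shinkansen instead?"):
        await plan_turn(chat, "test-session-1", text)
    print(len(chat.store["chat:test-session-1"]), seen_lengths)
    # prints: 6 [1, 3, 5]


if __name__ == "__main__":
    asyncio.run(main())
```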

Part 3: Update the gateway¶

The gateway gains a session_id parameter. Everything else stays the same -- one dependency, five lines of code.

import uuid

import mesh
from fastapi import FastAPI, Request
from mesh.types import McpMeshTool

app = FastAPI(title="Trip Planner Gateway", version="2.0.0")


@app.get("/health")
async def health():
    """Health check endpoint."""
    return {"status": "healthy"}


@app.post("/plan")
@mesh.route(dependencies=["trip_planning"])
async def plan_trip(request: Request, plan_trip: McpMeshTool = None):
    """Bridge HTTP to the mesh planner with session tracking."""
    body = await request.json()
    if not plan_trip:
        return {"error": "trip_planning capability unavailable"}

    session_id = request.headers.get("X-Session-Id") or str(uuid.uuid4())
    result = await plan_trip(
        destination=body["destination"],
        dates=body["dates"],
        budget=body["budget"],
        message=body.get("message", ""),
        session_id=session_id,
    )
    return {"result": result, "session_id": session_id}


if __name__ == "__main__":
    import uvicorn

    uvicorn.run(app, host="0.0.0.0", port=8080, log_level="info")

Session ID¶

If the client sends X-Session-Id, the gateway uses it. Otherwise it generates a UUID and returns it in the response so the client can use it for follow-up calls. The gateway passes session_id to the planner alongside the trip parameters -- the planner handles the rest.
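The header-or-generate rule is easy to test in isolation. A minimal sketch, assuming a plain dict of headers: resolve_session_id is a hypothetical helper, and the lowercase lookup only imitates what the gateway already gets for free, since Starlette's request.headers is case-insensitive. The extra is_new flag is a design choice added here to show when a client must be told its new ID.

```python
import uuid
from typing import Mapping


def resolve_session_id(headers: Mapping[str, str]) -> tuple[str, bool]:
    """Return (session_id, is_new): reuse X-Session-Id if present, else mint a UUID4."""
    lowered = {key.lower(): value for key, value in headers.items()}
    existing = lowered.get("x-session-id")
    if existing:  # same truthiness rule as `header or str(uuid.uuid4())`
        return existing, False
    return str(uuid.uuid4()), True
```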

Start and test¶

Install redis-py¶

If redis is not already in your venv:

Start the chat history agent¶

Your nine agents from Day 5 should still be running. Add chat-history-agent:

If you are starting fresh, launch everything at once:

$ meshctl start --dte --debug -d -w \
    chat-history-agent/main.py \
    claude-provider/main.py \
    openai-provider/main.py \
    flight-agent/main.py \
    hotel-agent/main.py \
    weather-agent/main.py \
    poi-agent/main.py \
    user-prefs-agent/main.py \
    planner-agent/main.py \
    gateway/main.py

Check the mesh:

Registry: running (http://localhost:8000) - 10 healthy

NAME                         RUNTIME  TYPE   STATUS   DEPS  ENDPOINT         AGE  LAST SEEN
chat-history-agent-3f2a1b9c  Python   Agent  healthy  0/0   10.0.0.74:9109   8s   2s
claude-provider-0a89e8c6     Python   Agent  healthy  0/0   10.0.0.74:49486  15m  2s
flight-agent-a939da4b        Python   Agent  healthy  1/1   10.0.0.74:49480  15m  2s
gateway-7b3f2e91             Python   API    healthy  1/1   10.0.0.74:8080   5m   2s
hotel-agent-9932ac09         Python   Agent  healthy  0/0   10.0.0.74:49482  15m  2s
openai-provider-40a5c637     Python   Agent  healthy  0/0   10.0.0.74:49485  15m  2s
planner-agent-fb07b918       Python   Agent  healthy  2/2   10.0.0.74:49484  15m  2s
poi-agent-97bd9fcc           Python   Agent  healthy  1/1   10.0.0.74:49481  15m  2s
user-prefs-agent-87506c4a    Python   Agent  healthy  0/0   10.0.0.74:49479  15m  2s
weather-agent-a6f7ea5e       Python   Agent  healthy  0/0   10.0.0.74:49483  15m  2s

Ten agents. The gateway shows 1/1 dependency -- just trip_planning. The planner shows 2/2 dependencies -- it resolved both user_preferences and chat_history.

List the tools:

TOOL             AGENT                        CAPABILITY        TAGS
-----------------------------------------------------------------------------------------------
claude_provider  claude-provider-0a89e8c6     llm               claude
flight_search    flight-agent-a939da4b        flight_search     flights,travel
get_history      chat-history-agent-3f2a1b9c  chat_history      chat,history,state
get_user_prefs   user-prefs-agent-87506c4a    user_preferences  preferences,travel
get_weather      weather-agent-a6f7ea5e       weather_forecast  weather,travel
hotel_search     hotel-agent-9932ac09         hotel_search      hotels,travel
openai_provider  openai-provider-40a5c637     llm               openai,gpt
plan_trip        planner-agent-fb07b918       trip_planning     planner,travel,llm
save_turn        chat-history-agent-3f2a1b9c  chat_history      chat,history,state
search_pois      poi-agent-97bd9fcc           poi_search        poi,travel

10 tool(s) found

Two new tools: save_turn and get_history, both from chat-history-agent.

Multi-turn demo¶

Turn 1 -- plan a trip:

$ curl -s -X POST http://localhost:8080/plan \
    -H "Content-Type: application/json" \
    -H "X-Session-Id: test-session-1" \
    -d '{"destination":"Kyoto","dates":"June 1-5, 2026","budget":"$2000"}'

{
  "result": "## Kyoto Trip Itinerary: June 1-5, 2026\n\n**Budget: $2,000**\n\n### Day 1 (June 1) - Arrival & Eastern Kyoto\n\n**Morning:**\n- Arrive via SQ017 ($901) — preferred airline per your preferences\n- Check into Sakura Inn ($95/night, 3-star) — meets your minimum star rating\n\n**Afternoon:**\n- Visit Fushimi Inari Shrine (cultural — matches your interests)\n...",
  "session_id": "test-session-1"
}

Turn 2 -- iterate on the plan:

$ curl -s -X POST http://localhost:8080/plan \
    -H "Content-Type: application/json" \
    -H "X-Session-Id: test-session-1" \
    -d '{"destination":"Kyoto","dates":"June 1-5, 2026","budget":"$1500","message":"Can you make it cheaper? I want to stay under $1500."}'

{
  "result": "## Revised Kyoto Itinerary: June 1-5, 2026\n\n**Budget: $1,500** (revised from $2,000)\n\n### Changes from Previous Plan\n- Switched to MH007 ($842, saving $59) — still a preferred airline\n- Downgraded to Capsule Stay ($45/night, saving $200 over 4 nights)\n- Replaced paid attractions with free alternatives\n\n### Day 1 (June 1) - Arrival\n...",
  "session_id": "test-session-1"
}

The second response references the first plan -- it knows about the previous hotel choice, the original budget, and the itinerary structure. This is the conversation history at work: the planner fetched the prior turns from Redis, passed them to the LLM as a multi-turn message list, and the LLM responded with awareness of the full dialogue.

Turn 3 -- ask a question:

$ curl -s -X POST http://localhost:8080/plan \
    -H "Content-Type: application/json" \
    -H "X-Session-Id: test-session-1" \
    -d '{"destination":"Kyoto","dates":"June 1-5, 2026","budget":"$1500","message":"What if I skip the flight and take the Shinkansen from Tokyo instead?"}'

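The planner sees both earlier exchanges plus the new question and adjusts accordingly. Each request appends two entries to the Redis list (the user message and the assistant reply), and the next request reads back up to the 20 most recent entries.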
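The curl calls above pin the session ID by hand. A client can instead let the gateway mint the ID on the first call and reuse the returned session_id afterward. A stdlib-only sketch: plan_request, post_plan, and GATEWAY_URL are hypothetical names, and the code assumes the Part 3 gateway is listening on http://localhost:8080.

```python
import json
import urllib.request
from typing import Optional

GATEWAY_URL = "http://localhost:8080/plan"  # assumes the Part 3 gateway runs locally


def plan_request(payload: dict, session_id: Optional[str] = None) -> urllib.request.Request:
    """Build the POST /plan request, adding X-Session-Id only when we already have one."""
    headers = {"Content-Type": "application/json"}
    if session_id:
        headers["X-Session-Id"] = session_id
    return urllib.request.Request(
        GATEWAY_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers=headers,
        method="POST",
    )


def post_plan(payload: dict, session_id: Optional[str] = None) -> dict:
    # Generous timeout: a planning call can take tens of seconds
    # (the Part 4 trace shows about 18 seconds for one request).
    with urllib.request.urlopen(plan_request(payload, session_id), timeout=120) as resp:
        return json.loads(resp.read().decode("utf-8"))
```

Usage: call post_plan(trip) once without a session, read session_id from the response, then pass it as the second argument on every follow-up so the planner finds the same Redis list.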

Part 4: Walk the trace¶

Open the mesh UI to view the trace:

Navigate to http://localhost:3080 and click the most recent trace. The call tree shows the planner's orchestration -- history fetch and save happen inside the planner, not the gateway:

└─ plan_trip (planner-agent) [18542ms] ✓
   ├─ get_history (chat-history-agent) [2ms] ✓
   ├─ get_user_prefs (user-prefs-agent) [1ms] ✓
   ├─ claude_provider (claude-provider) [18451ms] ✓
   │  ├─ flight_search (flight-agent) [14ms] ✓
   │  │  └─ get_user_prefs (user-prefs-agent) [0ms] ✓
   │  ├─ hotel_search (hotel-agent) [1ms] ✓
   │  ├─ get_weather (weather-agent) [0ms] ✓
   │  └─ search_pois (poi-agent) [21ms] ✓
   │     └─ get_weather (weather-agent) [0ms] ✓
   ├─ save_turn (chat-history-agent) [1ms] ✓
   └─ save_turn (chat-history-agent) [1ms] ✓

The flow reads top to bottom: the two tier-1 prefetches (chat history, 2ms; user preferences, 1ms), the LLM call (18s, most of which is the LLM reasoning loop and its tool calls), then one save for the user message and one for the assistant response (1ms each). Together the three chat history calls add about 4ms to an 18.5-second request -- negligible overhead, since Redis round-trips are sub-millisecond.

Stateful concerns are just agents

Redis-backed chat history, user profiles, booking state, audit logs -- they are all the same pattern: a mesh tool agent wrapping a data store. mesh does not need a special primitive for each one. The general abstraction -- any MCP tool anywhere is a local function call -- handles them all. Want to swap Redis for Postgres? Edit one agent. Want to add message encryption? Extend one agent. The gateway and planner do not change.

Leave it running¶

Your ten agents are running in watch mode. On Day 7 you will add a committee of specialists. No need to stop between chapters.

Troubleshooting¶

Redis connection refused. The chat-history-agent connects to Redis at localhost:6379 unless REDIS_HOST and REDIS_PORT say otherwise. Make sure the observability stack is running:

Check Redis is healthy:

History not persisting across calls. Verify you are sending the same X-Session-Id header in both requests. If the header is missing, the gateway generates a new UUID for each call -- each turn gets its own session with no shared history. Check the session_id field in the response.

Second turn does not reference the first. Three things to check:

- The chat_history dependency resolved: meshctl list should show the planner with 2/2 deps.

- Redis contains the turns: redis-cli LRANGE chat:test-session-1 0 -1 should show the saved JSON.

- The planner received the history: check the trace for get_history returning a non-empty list. If the planner's max_iterations is too low, the LLM may not fully process the history before hitting the iteration cap.

ModuleNotFoundError: No module named 'redis'. Install redis-py in your venv:

Recap¶

You added multi-turn chat history to the trip planner by building one new agent and updating two existing ones. The chat-history-agent wraps Redis with two tools (save_turn, get_history). The planner owns the full chat lifecycle -- it fetches history before the LLM call and saves turns after. The gateway stays thin: one dependency, session ID passthrough. No framework changes, no special chat primitives -- just another mesh tool agent wired through dependency injection.

See also¶

- meshctl man decorators -- the @mesh.tool and @mesh.route decorator reference

- meshctl man dependency-injection -- how DI resolves multi-tool capabilities

- meshctl man llm -- multi-turn message format for llm() calls

Next up¶

Day 7 adds a committee of specialists -- three LLM agents (budget analyst, adventure advisor, logistics planner) that the planner consults in parallel before producing the final itinerary.